
Haar-feature Object Detection in C#

A description of how it was possible to achieve real-time face detection with some clever ideas back in 2.

Contents: Introduction, Background, The simple features, The attentional cascade, The integral image representation, Source code, Using the code, Points of interest, Conclusion, References.

Introduction

In 2.

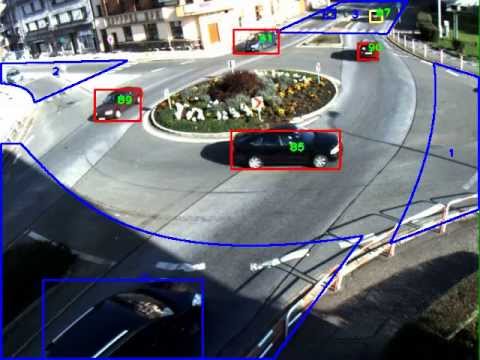

Viola and Jones proposed the first real-time object detection framework. This framework, being able to operate in real time on 2. And the result everyone knows: face detection is now a default feature for almost every digital camera and cell phone on the market. Even if those devices may not be using their method directly, the now ubiquitous availability of face-detecting devices has certainly been influenced by their work.

Now, here comes one of the more interesting points of this framework. To locate an object in a large scene, the algorithm simply performs an exhaustive search using a sliding window, trying different sizes, aspect ratios, and locations. How could something like this be so efficient? This is where the authors' contributions kick in.

This article introduces the reader to the Viola-Jones object detection framework and guides them through its implementation inside the Accord.NET Framework. A sample application is provided so interested readers can try the image detection and see how it can be performed using the framework.

Background

The contributions brought by Paul Viola and Michael Jones were threefold. First, they focused on creating a classifier based on the combination of several weak classifiers, each based on extremely simple features, in order to detect a face. Second, they modified a then-standard algorithm for combining classifiers to generate classifiers which could take some time to actually detect a face in an image, but which could reject regions not containing a face extremely rapidly. And third, they used a neat image representation which could effectively pre-compute, all at once, nearly all the costly operations needed for running their classifier.

The simple features
Most of the time, when we are about to create a classifier, we first have to decide which features to consider. A feature is a characteristic, something which will hopefully bring enough information into the decision process so the classifier can cast its decision. For example, suppose we are trying to create a classifier for distinguishing whether a person is overweight. A direct choice of features would be the person's height and weight. Hair color, for example, would not be a very informative feature in this case.

So, let us come back to the features chosen for the Viola-Jones classifier. The features shown below are Haar-like rectangular features. While it is not immediately obvious, what they represent is the difference in intensity (grayscale) between two or more adjacent rectangular areas in the image.

For instance, consider if one of those features is placed over an image, such as the Lena S. The value of the feature would be the result of summing all pixel intensities in the white side of the rectangle, summing the pixels in the blue side of the rectangle, and then computing their difference. Hopefully it should be clear from the images on the right side of the sequence why those rectangular features would be effective in detecting a face. Due to the uniformity of shadows in the human face, certain features seem to match it very well.

The image above also gives an idea of how the search algorithm works. It starts with either a large (or small) window and scans the image exhaustively (i.e. ...). When a scan finishes, it shrinks (or grows) this window, repeating the process all over again.

The attentional cascade

If the detector weren't extremely fast, this scheme most likely wouldn't have worked well in real time. The catch is that the detector is extremely fast at discarding unpromising windows, so it can quickly determine that a region does not contain a face. When it isn't very sure about a given region, it spends more time trying to check that it isn't a face.
When it finally gives up on trying to reject a region, it can only conclude that the region is a face.

So, how does the detector do that? It does so by using an attentional cascade. A cascade is a way of combining classifiers such that a given classifier is only processed after all the classifiers coming before it have already been processed. In a cascade, the object of interest is only allowed to proceed if it has not been discarded by the previous detector.

The classification scheme used by the Viola-Jones method is actually a cascade of boosted classifiers. Each stage in the cascade is itself a strong classifier, in the sense that it can obtain a really high rejection rate by combining a series of weaker classifiers in some fashion.

A weak classifier is a classifier which operates only marginally better than chance. This means it is only slightly better than flipping a coin and deciding if there is something in the image or not. Nevertheless, it is possible to build a strong classifier by combining the decisions of many weak classifiers into a single, weighted decision. This process of combining several weak learners to form a strong learner is called boosting. Learning a classifier like this can be performed, for example, using many of the variants of the AdaBoost learning algorithm.

In the method proposed by Viola and Jones, each weak classifier could depend on at most a single Haar feature. Interestingly enough, therein lay a solution to an untold problem: Viola and Jones had patented their algorithm. So in order to use it commercially, you would have to license it from the authors, possibly paying a fee. As a way to extend the detector, Dr. Rainer Lienhart, the original implementer of the OpenCV Haar feature detector, proposed adding two new types of features and transforming each weak learner into a tree. This latter trick, besides helping in the classification, was also sufficient to get out of the patent protection of the original method.
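The weighted decision described above can be illustrated with a minimal sketch. It assumes each weak classifier is a simple threshold test on one precomputed feature value, and that the strong classifier accepts when the weighted votes reach half of the total weight. The names WeakClassifier, BoostingSketch and StrongClassify are hypothetical and are not taken from Accord.NET or OpenCV; the weights would normally be produced by a boosting algorithm such as AdaBoost, which is not shown here.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical types for illustration only; not part of Accord.NET or OpenCV.
public sealed class WeakClassifier
{
    public int FeatureIndex;   // the single feature this classifier looks at
    public double Threshold;   // decision threshold on that feature value
    public int Polarity;       // +1 or -1, selects the direction of the test
    public double Alpha;       // vote weight (assumed to come from boosting)

    // Returns 1 (object) or 0 (not object) based on one feature value.
    public int Classify(double[] features)
    {
        return Polarity * features[FeatureIndex] < Polarity * Threshold ? 1 : 0;
    }
}

public static class BoostingSketch
{
    // Weighted vote: accept when the weighted sum of the individual votes
    // reaches half of the total weight.
    public static bool StrongClassify(IList<WeakClassifier> weak, double[] features)
    {
        double votes = weak.Sum(w => w.Alpha * w.Classify(features));
        double total = weak.Sum(w => w.Alpha);
        return votes >= 0.5 * total;
    }
}
```

A single heavily weighted weak classifier can outvote several lightly weighted ones, which is what lets many barely-better-than-chance tests add up to a useful decision.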
Well, so up to now we have a classification system which can be potentially fast at rejecting false positives. However, remember that this classifier has to operate on several scaled regions of the image in order to completely scan a scene. Computing differences in intensities would also be quite time consuming (imagine summing a rectangular area again and again, for each feature, and recomputing it for each re-scaling). What can be done to make it faster?

The integral image representation

Caching. This is an optimization we often perform every day when coding, like storing a value in a variable outside a loop instead of recomputing it on every iteration. I think most are familiar with the idea.

The idea for making the Haar detection practical was no different. Instead of recomputing sums of rectangles for every feature at every re-scaling, compute all sums at the very beginning and save them for future computations. This can be done by forming a summed area table for the frame being processed, also known as computing its integral image representation.

The idea is to precompute the sums needed to obtain any rectangular area in the image. Fortunately, this can be done in a single pass over the image using a recurrence formula. In an integral image, the area of any rectangular region in the image can be computed using only 4 array accesses. The picture below may hopefully help in illustrating this point.

The blue matrices represent the original images, while the purple ones represent the images after the integral transformation. If we were to compute the shaded area in the first image, we would have to sum all pixels individually, reaching the answer of 2. Using the integral image, all that is needed is a single access (but only because the region touches the border). When the region does not touch the border, it requires at most 4 array accesses, independently of the size of the region, effectively reducing the computational complexity from O(n) to O(1).
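As a rough illustration of the summed area table, the sketch below builds an integral image in a single pass and then reads a rectangle sum with four array accesses. It is not the Accord.NET implementation: the class IntegralImageSketch and its methods are hypothetical names, and the table is padded with an extra row and column of zeros, which is one common convention chosen here to avoid special cases at the border. The last method shows how a two-rectangle Haar-like feature could be read from the same table.

```csharp
using System;

// Hypothetical helper for illustration; not the Accord.NET integral image class.
public static class IntegralImageSketch
{
    // Builds a table with one extra row and column of zeros, so that
    // table[y, x] holds the sum of all pixels strictly above row y
    // and strictly to the left of column x.
    public static long[,] Build(byte[,] pixels)
    {
        int height = pixels.GetLength(0), width = pixels.GetLength(1);
        var table = new long[height + 1, width + 1];
        for (int y = 1; y <= height; y++)
            for (int x = 1; x <= width; x++)
                // Recurrence: current pixel + sum above + sum to the left,
                // minus the overlap that would otherwise be counted twice.
                table[y, x] = pixels[y - 1, x - 1]
                            + table[y - 1, x] + table[y, x - 1]
                            - table[y - 1, x - 1];
        return table;
    }

    // Sum of the rectangle whose top-left pixel is (x, y): four lookups,
    // two subtractions and one addition, whatever the rectangle size.
    public static long RectangleSum(long[,] table, int x, int y, int width, int height)
    {
        return table[y + height, x + width] - table[y, x + width]
             - table[y + height, x] + table[y, x];
    }

    // Two-rectangle Haar-like feature: sum of the left half minus
    // sum of the right half of the given region.
    public static long TwoRectangleFeature(long[,] table, int x, int y, int width, int height)
    {
        int half = width / 2;
        long left = RectangleSum(table, x, y, half, height);
        long right = RectangleSum(table, x + half, y, half, height);
        return left - right;
    }
}
```

Because the table is built once per frame, every feature at every position and scale reuses it, which is the caching idea described above.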
It requires only two subtractions and one addition to retrieve the sum of the shaded area in the right image: take the integral value at the region's bottom-right corner, subtract the values just above its top-right corner and just left of its bottom-left corner, and add back the value just above and to the left of its top-left corner, which was subtracted twice.

Source code

Finally, the source code! Let's begin by presenting a class diagram with the main classes for this application.

I am sorry if it is a bit difficult to read, but I tried to keep it as dense as possible so it could fit more or less under 6. You can check the most up-to-date version on the Accord.NET Framework site.

Well, so first things first. The exhaustive search explained before (in the introduction) happens in the HaarObjectDetector. This is the main object detecting class. Its constructor accepts a HaarClassifier as a parameter, which will then be used in the object detection procedure. The role of the HaarObjectDetector is just to scan the image with a sliding window, relocating and re-scaling as necessary, then calling the HaarClassifier to check whether or not there is a face in the current region.

The classifier, on the other hand, is completely specified by a HaarCascade object and its current operating scale. I forgot to say, but the window does not really need to be re-scaled during the search. The Haar features are re-scaled instead, which is much more efficient.

So, continuing. The HaarCascade possesses a series of stages, which should be evaluated sequentially. As soon as a stage in the cascade rejects the window, the classifier stops and returns false. This is best seen by actually checking how the HaarClassifier runs through the cascade:

public bool Compute(IntegralImage2 image, Rectangle rectangle) ... Math.Sqrt(factor) : 1 ... HaarCascadeStage stage in cascade.Stages) ...

And now comes the Classify method of the HaarCascadeStage object. Remember that each stage contains a series of decision trees. All we have to do then is process the several decision trees and check whether their combined output is higher than a decision threshold.
Classify(IntegralImage2 image, int x, int y, double factor) ...
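Since the listings above are incomplete, here is a simplified sketch of the control flow they describe: a stage sums the outputs of its trees and compares the total with a stage threshold, and the cascade stops at the first stage that rejects the window. This is not the Accord.NET code. StumpSketch, StageSketch and CascadeSketch are hypothetical names, each tree is reduced to a single-node stump, and the feature is passed in as a delegate instead of being read from an integral image. The sketch also assumes a stage accepts when the sum reaches its threshold.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical, simplified types; the real stage classes evaluate trees of
// Haar features read from an integral image.
public sealed class StumpSketch
{
    public Func<int, int, double, double> Feature; // feature value at (x, y, scale factor)
    public double Threshold;
    public double LeftValue;   // output when the feature value is below the threshold
    public double RightValue;  // output otherwise

    public double Evaluate(int x, int y, double factor)
    {
        return Feature(x, y, factor) < Threshold ? LeftValue : RightValue;
    }
}

public sealed class StageSketch
{
    public List<StumpSketch> Trees = new List<StumpSketch>();
    public double Threshold;

    // A stage accepts the window when the summed tree outputs reach its threshold.
    public bool Classify(int x, int y, double factor)
    {
        double sum = 0;
        foreach (StumpSketch tree in Trees)
            sum += tree.Evaluate(x, y, factor);
        return sum >= Threshold;
    }
}

public static class CascadeSketch
{
    // Stages run in order; the first rejection ends the evaluation,
    // so later stages are never computed for that window.
    public static bool Compute(IEnumerable<StageSketch> stages, int x, int y, double factor)
    {
        foreach (StageSketch stage in stages)
            if (!stage.Classify(x, y, factor))
                return false;
        return true;
    }
}
```

Ordering cheap stages first means most windows are rejected after only a few feature evaluations, which is where the speed of the detector comes from.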
